Veves are drawings in which the process of drawing the picture invokes the name of a spirit or god (a Loa). This project is about invoking music from the process of drawing.

Video:

- YouTube http://www.youtube.com/watch?v=jzeWBmdG-j8

- Video file (50 MB) http://softwareprocess.es/y/y/veve-demo.ogg

Audio:

- Ogg file (5 MB) http://softwareprocess.es/y/veve-demo-audio.ogg

- Ogg file (3 MB) http://softwareprocess.es/y/lixin-veve.ogg (a different composition)

The software I created tracks what you draw, using webcam input (I used a whiteboard and some dry-erase markers). Your drawing is stored as a temporal bitmap, which is then traversed in time order. This traversal produces a path, and that path is converted into music. Usually it acts like a sequencer. A jagged path has lots of sub-paths and sounds staccato, whereas a smooth path does not. The angle at each point in a path determines whether that point is a path vertex.

Little lines and dots get turned into short flute bursts or drums, while longer lines and larger objects become long duration flute tones.

To learn more about veves, go here: http://en.wikipedia.org/wiki/Veve To learn more about Vodou or Voodoo, go here: http://en.wikipedia.org/wiki/Haitian_Vodou

The idea is originally from Matthew Skala who has considerable multicultural expertise in these sorts of things: http://ansuz.sooke.bc.ca/

Here's the software if you want it. It is very Linux specific; it might work on other unices, but I doubt it.

http://github.com/abramhindle/magic-sigils

You'll need csound: http://csounds.com/

Before I offend anyone: I'm not drawing veves. The only relation to veves is the idea of a drawing producing an effect.

This is a first stab and the UI is pretty bad. Also it is hard to tell what is happening because it is pretty abstract.

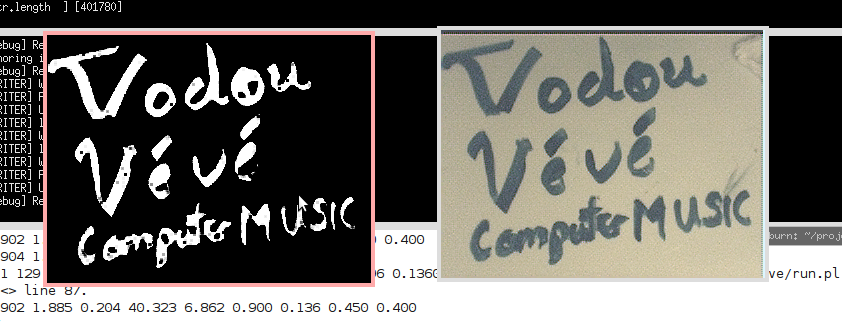

I read from the webcam; this is the color display. My program, written in OCaml, reads this bitmap, then equalizes and thresholds the image so that only the dark stuff becomes the positive content. This technique means images of my hand have a bit less of an effect. Then we sum the positive pixels of this cleaned-up bitmap with the historical image I have, and the result is displayed as the black and white image. This means that things that stay the same persist, whereas things that don't stay the same fade out. I then walk through the bitmap based on the time each part was changed, find the centroid of that event, then move on to the next event. I build up a list of centroids, and these become my path. I simplify the path by removing intermediate nodes which do not modify the angle. Then I send this path out and decide how to turn it into music.
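The thresholding step can be sketched roughly as follows. This is Python rather than the OCaml the program is written in, and it is only an illustration: `threshold_dark` and `cutoff` are made-up names, and a simple min-max contrast stretch stands in for whatever equalization the real code performs.

```python
def threshold_dark(gray, cutoff=0.5):
    """Mark dark pixels as positive (1) and everything else as negative (0).

    `gray` is a list of rows of brightness values. The min-max stretch is an
    assumed stand-in for the equalization step, not the program's actual code.
    """
    flat = [v for row in gray for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A completely flat image has no drawing in it.
        return [[0 for _ in row] for row in gray]
    span = hi - lo
    # After stretching to 0..1, anything below the cutoff counts as "dark".
    return [[1 if (v - lo) / span < cutoff else 0 for v in row] for row in gray]


# A bright whiteboard with a dark diagonal stroke.
frame = [
    [250, 240, 30],
    [245, 20, 235],
    [25, 230, 255],
]
mask = threshold_dark(frame)
```

Only the three dark pixels on the diagonal end up in `mask`; the slightly uneven whiteboard background is treated as negative.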

To turn a path into music, I walk the path calculating its vectors and their angles and magnitudes. These are then mapped to the music. Magnitude is mapped to duration, but short durations also map to drum sounds. Angles modify pitch. This mapping is arbitrary; you can do a lot more and potentially make it seem more like the drawing, but the drawing has no inherent musicality, so it is up to me to do something about it.
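One way such a mapping could look, as a hedged Python sketch. The function name, the drum cutoff, the base pitch, and the scaling constants are all invented for illustration; the actual program sends its notes to csound and its numbers are not given here.

```python
import math


def path_to_notes(path, drum_below=20.0, base_pitch=60):
    """Turn a list of (x, y) points into (instrument, pitch, duration) notes.

    All constants are arbitrary illustration values, not the program's own.
    """
    notes = []
    for (x0, y0), (x1, y1) in zip(path, path[1:]):
        dx, dy = x1 - x0, y1 - y0
        magnitude = math.hypot(dx, dy)
        angle = math.atan2(dy, dx)  # radians, between -pi and pi
        # The angle shifts the pitch by up to one octave either way.
        pitch = base_pitch + round(12 * angle / math.pi)
        # Short segments become drum hits, longer ones flute tones.
        instrument = "drum" if magnitude < drum_below else "flute"
        duration = magnitude / 100.0  # seconds, arbitrary scale
        notes.append((instrument, pitch, duration))
    return notes


notes = path_to_notes([(0, 0), (100, 0), (100, 10)])
```

Here the long horizontal segment becomes a one-second flute tone at the base pitch, and the short vertical tick becomes a brief drum hit six semitones up.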

Notes I wrote before I attempted this:

The idea is that pixels are ageing. We have two kinds of pixels: positive pixels (part of the drawing, black) and negative pixels (part of empty space, white). We get these black and white snapshots all the time, and each snapshot is just positive and negative. But these effectively become age masks applied to our age height-field.

We take the snapshot and we weight the positive and negative values. Maybe black is +1 and white -2. So that means for each snapshot we keep applying this mask to the ageing height-field image.
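A small Python sketch of that update, using the +1/-2 weights suggested above. The name `apply_mask` is hypothetical, and clamping ages at zero is an added assumption (one possible answer to negative space growing without bound), not something these notes specify.

```python
def apply_mask(age, mask, positive=1, negative=-2):
    """Age each pixel by `positive` where the mask is set, `negative` elsewhere.

    Ages are clamped at zero, which is an assumption made for this sketch.
    """
    return [
        [max(0, a + (positive if m else negative)) for a, m in zip(age_row, mask_row)]
        for age_row, mask_row in zip(age, mask)
    ]


# Two pixels: the left one is drawn and stays, apart from one frame where a
# hand covers it; the right one is a glitch that shows up in one frame only.
age = [[0, 0]]
frames = [[[1, 1]], [[1, 0]], [[1, 0]], [[1, 0]], [[0, 0]], [[1, 0]]]
for mask in frames:
    age = apply_mask(age, mask)
```

After these six frames the left pixel has age 3 (it reached 4, lost 2 while covered, then gained 1), while the right pixel has decayed back to 0.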

The assumption is that if say my hand were to cover up part of a drawing this wouldn't have much of an effect unless it was more permanent. So temporary interruptions de-age some parts of the height-field, but probably not enough to care! What it does mean is that old positive parts that still exist will continue to grow and increase in age, while the parts that get erased get decayed.

I suspect there will be a problem with very old negative spaces?

What is neat about this is that it gives us thresholding: we can now step through time, following this manifold down. Imagine taking cross sections at each step; would that be the direction of the drawing?

Think of the age image as a height-field, and think of a water level that starts at the top and slowly drops, basically following the manifold down.
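The dropping water level can be illustrated with a short Python sketch. `slices_by_age` is a made-up name, and the example field is invented: it is a 4x3 age image in which the oldest pixel has age 4.

```python
def slices_by_age(age):
    """Yield (level, pixels) from the oldest age down to 1.

    Each slice lists the (x, y) pixels sitting exactly at the current water
    level, so the slices come out in the order the pixels were drawn.
    """
    top = max(max(row) for row in age)
    for level in range(top, 0, -1):
        pixels = [
            (x, y)
            for y, row in enumerate(age)
            for x, value in enumerate(row)
            if value == level
        ]
        if pixels:
            yield level, pixels


field = [
    [4, 0, 0, 0],
    [0, 3, 0, 1],
    [0, 0, 2, 0],
]
order = list(slices_by_age(field))
```

For this field the slices come out as the pixels at (0, 0), (1, 1), (2, 2) and finally (3, 1), oldest first.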

A sample sequence with +1/0 might look like this (five successive snapshots of a 4x3 age field, left to right):

    +0 +0 +0 +0    +1 +0 +0 +0    +2 +0 +0 +0    +3 +0 +0 +0    +4 +0 +0 +0
    +0 +0 +0 +0    +0 +0 +0 +0    +0 +1 +0 +0    +0 +2 +0 +0    +0 +3 +0 +1
    +0 +0 +0 +0    +0 +0 +0 +0    +0 +0 +0 +0    +0 +0 +1 +0    +0 +0 +2 +0

This means we can just play back the drawing! Although we miss crossovers.

This also allows us to vectorize the drawing. How? Take the centroid of the new step every time. For a certain time slice, take the centroid of the new stuff and compare it with the previous centroid; this should give a vector! All these vectors might be really nice, and we can keep simplifying if necessary.
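As a rough Python illustration of the centroid idea (the helper names `centroid` and `vectors_from_slices` are hypothetical, and each time slice is assumed to be a plain list of (x, y) pixels):

```python
def centroid(pixels):
    """Mean position of a non-empty list of (x, y) pixels."""
    n = len(pixels)
    return (sum(x for x, _ in pixels) / n, sum(y for _, y in pixels) / n)


def vectors_from_slices(slices):
    """Difference between the centroids of consecutive non-empty slices."""
    centroids = [centroid(pixels) for pixels in slices if pixels]
    return [
        (x1 - x0, y1 - y0)
        for (x0, y0), (x1, y1) in zip(centroids, centroids[1:])
    ]


# Three time slices of newly drawn pixels.
slices = [[(0, 0), (2, 0)], [(3, 1)], [(3, 5), (5, 5)]]
vectors = vectors_from_slices(slices)
```

The centroids here are (1, 0), (3, 1) and (4, 5), so the drawing is summarized by the two vectors (2, 1) and (1, 4).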

Possible instruments would be little flute sounds, granular synthesis, could mess around with the timings and make it sort of cloud-like.

A webcam would work; we could also make an SDL drawer, or steal some paint program and send changes along.

One possible method of simplifying the vectors is to join them if their angles are pretty similar, and only keep those transitions which turn by more than 10 degrees or so.
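A minimal Python sketch of that kind of simplification, assuming the path is a list of (x, y) points. The names `heading` and `simplify` and the exact comparison (heading into a point versus heading out of it) are choices made for this example only.

```python
import math


def heading(a, b):
    """Direction of travel from point a to point b, in radians."""
    return math.atan2(b[1] - a[1], b[0] - a[0])


def simplify(path, min_turn_deg=10.0):
    """Drop intermediate points where the path turns by min_turn_deg or less."""
    if len(path) < 3:
        return list(path)
    kept = [path[0]]
    for cur, nxt in zip(path[1:], path[2:]):
        turn = heading(cur, nxt) - heading(kept[-1], cur)
        # Wrap to [-180, 180) so a 350 degree difference counts as 10 degrees.
        turn_deg = (math.degrees(turn) + 180.0) % 360.0 - 180.0
        if abs(turn_deg) > min_turn_deg:
            kept.append(cur)
    kept.append(path[-1])
    return kept


wobbly = [(0, 0), (1, 0), (2, 0.05), (3, 0), (3, 1), (3, 2)]
simple = simplify(wobbly)
```

The slight wobble along the first leg is joined into one segment, and only the right-angle corner at (3, 0) survives as a vertex.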

This seems great because it has time dependence and order, as well as repetition. One could cycle through or, to make a less obvious transition, bounce back and forth.

Another expansion of the idea: track motion. The positive and negative are simply differences between frames! Positive is what changes, negative is everything else. We'd get interesting shapes/manifolds that way, and if we had the centroid tracking, then we'd have gestures!
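A tiny Python sketch of the frame-difference mask, under the assumption that frames are lists of rows of brightness values. `motion_mask` and the `min_change` threshold are invented names and values.

```python
def motion_mask(prev, cur, min_change=30):
    """Mark pixels whose brightness changed by at least min_change as positive."""
    return [
        [1 if abs(c - p) >= min_change else 0 for p, c in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(prev, cur)
    ]


before = [[10, 10, 10], [10, 10, 10]]
after = [[10, 200, 10], [10, 10, 15]]
moved = motion_mask(before, after)
```

Only the pixel that jumped from 10 to 200 is marked; the small flicker from 10 to 15 stays negative. A mask like this could be fed into the same ageing and centroid steps as the thresholded drawing.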